Fundamentalist Christians Lash Out as Numbers Dwindle

March 2, 2018 in Pantheism News

Recent comments made by Christian conservatives appear to blame non-believers for everything from school shootings to the downfall of civilization. As younger Americans increasingly identify as “post-Christian”, the Far Right is ratcheting up their attacks on those they consider godless and immoral.

Referring to the school shootings in Parkland, Florida during a March 1st Oklahoma Senate invocation, pastor Bill Ledbetter of the Fairview Baptist Church asked senators, “Do we really believe we can create immorality in our laws, do we really believe that we can redefine marriage from the word of God to something in our own mind, and there not be a response?”

Ledbetter added, “What if this is God’s way of letting us know he doesn’t approve of our behavior?” Of course, this is not the first time that members of the Far Right have cast blame for tragedies like the Parkland shootings on those who do not share their views of God: televangelist Pat Robertson has a long history of blaming everything from the last stock market dive to tornadoes on those who teach evolution, believe in reproductive rights, or support marriage equality.

But now, even politicians are making similar, inflammatory remarks. In a February 17th speech to the Ave Maria School of Law, former House Speaker and perennial presidential candidate Newt Gingrich stated, “The rise of a secular, atheist philosophy” in the West is “an equally or even more dangerous threat” to Christianity than Muslim terrorist organizations that will kill Christians if “they don’t submit.” In claiming there are “two horrendous wars underway against Christianity” (Muslim and atheist) and that “secular philosophy dominates universities and is embraced by newspaper editors and Hollywood”, Gingrich seems to be equating religious terrorism with the simple act of non-belief.

According to a March 2, 2018 Politico article, a newly unearthed series of Oklahoma talk radio shows from 2005 reveals that the current Environmental Protection Agency Administrator, Scott Pruitt, dismissed evolution as an unproven theory and lamented that “minority religions” were pushing Christianity out of “the public square.” He also advocated for an amendment to the Constitution to ban abortion, prohibit same-sex marriage and protect the Pledge of Allegiance and the Ten Commandments.

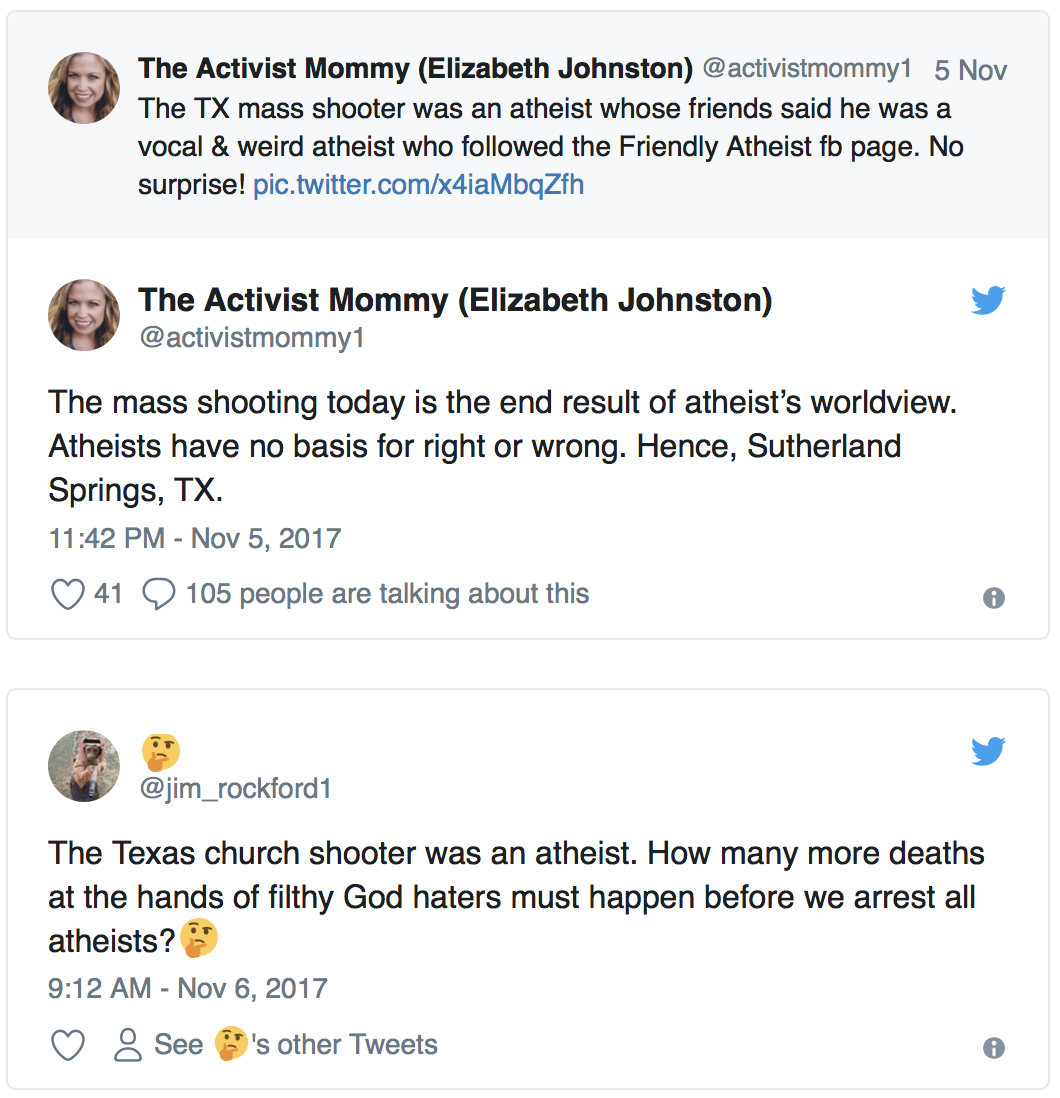

Nor is the fighting rhetoric limited to political and religious leaders. After it was initially reported that Devin Patrick Kelley, the shooter in the Texas Baptist Church massacre, had “liked” the Friendly Atheist, a Facebook page for non-believers, Christian social media users were quick to place the blame on godlessness.

Such incendiary rhetoric may be a reflexive reaction to the dwindling numbers of those who share their ideology. A January 24, 2018 study conducted by the Barna Group shows the percentage of Generation Z (people born between 1999 and 2015) who identify as atheist is double that of the general U.S. adult population. Conducted in partnership with the Impact 360 Institute, the study found that 6 percent of the adult population identifies as atheist, compared to 13 percent of these teens.

Baylor sociology professor Dr. Paul Froese, a specialist in the sociology of religion, culture and politics, said there are many variables encouraging this trend, but cited a few factors as the most likely causes. First, younger generations are normally less religious than their older counterparts. Froese also cites the pace of modern society: he believes we are becoming too busy for organized religion. “One thing that it often comes down to is that there is less time to be religious in the modern world… With all kinds of activities and groups to belong to and internet to search, people are finding that they don’t have the time or they don’t think it’s important to put the time into organized religion,” Froese said.

Others attribute the decrease in youthful religiosity to the increase in answers available through technology. Where once God was the sole resource for answers to medical and other hardships, science now provides solutions. An increase in public discourse and academic reports on atheism may also be a factor in the diminished religious affiliation of younger generations.

But the decline is not exclusive to Generation Z. According to the Public Religion Research Institute (PRRI), a polling organization based in Washington, D.C., white Christians, who were once predominant in the country’s religious life, now comprise only 43 percent of the American population. Those who identify as white evangelicals are an even smaller group, having shrunk from about 23 percent of the population a decade ago to just 17 percent today. The study goes on to say that nearly a quarter of all Americans now do not identify with any faith group.

Whatever the cause of these declines, the Christian Right is treating them as a four-alarm fire. Newt Gingrich claims that if “radical secularists” take over the government, they will “impose radical values” on everyone. But as history teaches us over and over, the true radical is born of threatening circumstances. Recent news shows that dwindling numbers of “true believers” can lead to some very radical responses.

Inducing VR “Hallucinations” to Study Consciousness

November 27, 2017 in Pantheism News

Researchers at Sussex University’s Sackler Centre for Consciousness Science in England have created a virtual reality experience that mimics the visual hallucinations induced by LSD or psilocybin.

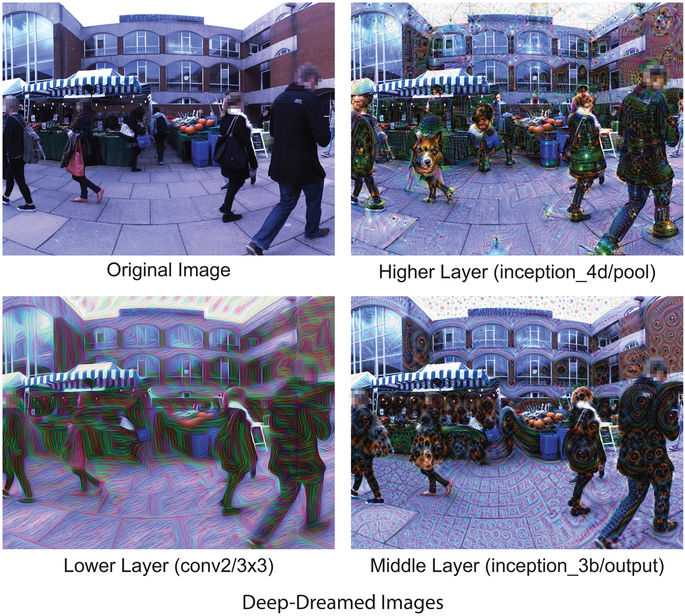

The “Hallucination Machine”, as it is known, consists of a VR headset connected to Google’s DeepDream neural network, a computer vision program that uses algorithms to deliberately over-process images. The combination produces a dream-like scenario for the participants, allowing the scientists to study how the brain processes the world. Unlike studies investigating drug-induced altered states of consciousness, the Sackler Centre researchers are manipulating just one aspect of those states—the visual state—to isolate the effect on human brains.

Twelve volunteers for the experiment described having visual hallucinations similar to those reported after taking psychedelics. The ‘trip’ was visually likened to that of psilocybin, the active ingredient in magic mushrooms, according to a November 22, 2017 article in Scientific Reports.

The Sackler report is one of a growing number on Altered States of Consciousness (ASCs). Once a legitimate realm of university study championed by hallucinogen enthusiasts like Timothy Leary and Terence McKenna, ASC studies fell out of favor during the Nixon era, and have remained largely taboo since then. This began to change in 2010, however, when researchers from around the world gathered in San Jose, California, for the largest conference on psychedelic science held in the United States in over four decades. The overarching theme of all these new studies is an attempt to understand the neural underpinnings that cause ASCs, and to develop potential psychotherapeutic applications from the research.

While similar in focus, the Sackler research is unique in attempting to emulate just one portion of the psychedelic experience, using technology instead of drugs. As Anil Seth, one of the leading researchers of the study, put it, “Psychedelic compounds have many systemic physiological effects, not all of which are likely relevant to the generation of altered perceptual phenomenology. It is difficult, using pharmacological manipulations alone, to distinguish the primary causes of altered phenomenology from the secondary effects of other more general aspects of neurophysiology and basic sensory processing.” Specifically, the group was able to see brain effects without the temporal dilations and distortions accompanying a hallucinogenic drug like psilocybin. The singular focus of the “Hallucination Machine” on visual stimulation may be seen by scientists as a safer alternative to psychedelic studies.

The researchers emphasized that their technology is still in development, but added that it could be a useful stepping stone for further ASC studies. As they concluded, “Overall, the Hallucination Machine provides a powerful new tool to complement the resurgence of research into altered states of consciousness.”

Pantheism Short Documentary Wins Audience Award

November 4, 2017 in Pantheism News

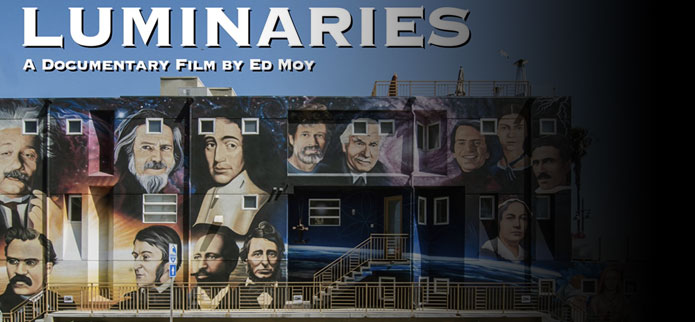

A new documentary about the “Luminaries of Pantheism” mural in Venice Beach, California has won the “Audience Award” at the Marina Del Rey Film Festival. The annual festival screened over 150 films from October 4 to 8, 2017, and held its Awards Ceremony on October 9, 2017.

Located in the Los Angeles area, the Marina Del Rey Film Festival fosters up-and-coming filmmakers throughout the entertainment capital’s region. Directed and produced by documentarian Ed Moy, the winning short film, simply titled “Luminaries,” showcases the creation and historical background of one of the most popular murals in the Los Angeles area.

The subject of the documentary is at the end of South Venice Boulevard where it meets Venice Beach. The mural spans the entire length of a large building, which serves as the headquarters of the philosophical organization, The Paradise Project. Facing the famous Venice Boardwalk, it is visible to more than a million visitors each year. The painting nearly always has a camera-ready crowd gathered in front of it, with #luminariesofpantheism tagged photos appearing frequently on social media sites. The site is also recognized by Google Maps as a Public Art tourist destination.

“Luminaries of Pantheism” features 16 famous philosophers, scientists and poets who believe that “Everything is connected, and everything is divine.” It was conceived by The Paradise Project chairman, Perry Rod. A contest was subsequently held to choose the winning design, which was created by local artist Peter Moriarty. Finally, the mural was painted over a two-month period in early 2015 by the internationally famed muralist, Levi Ponce. Each of these contributors has a prominent role in the film, along with The Paradise Project board members Nika Avila and Chuck Beebe.

Covering both the making of the mural and some of the story behind its famous “luminaries,” the documentary was a hit with festival-goers. This is the second win for director Ed Moy, who won for best documentary in 2016 for the film, “Aviatrix: The Katherine Sui Fun Cheung Story.” Plans are in the works for an extended version of the film, which will cover more of the subjects featured within the mural.

- See the short form of the documentary here

Pew Study Shows Marked Increase in ‘Spiritual But Not Religious’

October 5, 2017 in Pantheism News

A recent Pew Research Center study showed that Americans are growing less religious, opting to identify as “Spiritual But Not Religious” rather than maintaining a single religious ideology. In just 5 years, the percentage of U.S. adults identifying as such has grown 8 percentage points, from 19% to 27% of the population as a whole. Conversely, the share of those who identify as ‘religious’ has dropped significantly, down 11 percent since 2012. What accounts for these changes? Are Americans simply being lazy with their faith adherence, or are they making a conscious choice to move away from religion and towards spirituality?

To some degree, the answer depends on who is asked. The Catholic website Aleteia characterizes those who are “Spiritual But Not Religious” (SBNR) as ineffectual cherry-pickers: “Most moderns who say they have faith in everything mean to say they have faith in nothing.” It argues against selective adherence to a variety of faith traditions by using the metaphor of a paint-box: “All the colors mixed together in purity ought to make a perfect white. Mixed together on any human paint-box, they make a thing like mud, and a thing very like many new religions. Such a blend is often something much worse than any one creed taken separately…”

Another common argument against being ‘Spiritual But Not Religious’ is that it does not provide a useful ritualistic framework. SBNR critics claim humans need ritual, and that ritual exists for a reason: “…as creed and mythology produce this gross and vigorous life, so in its turn this gross and vigorous life will always produce creed and mythology.” (From “Heretics,” by Gilbert Chesterton)

Criticism of SBNR beliefs is not limited to the right, either. A recent article in Psychology Today offers, “Spirituality sometimes goes with a set of practices that may be reassuring and possibly healthy. Activities such as yoga and tai chi are good forms of exercise that make sense independent of any spiritual justification. (But) Sometimes spirituality fits with rejection of modern medicine, which despite its limitations is far more likely to cure people than weird ideas about quantum healing and ineffable mind-body interactions. Evidence-based medicine is better than fuzzy wishful thinking.”

If being ‘Spiritual But Not Religious’ leads one to a colorless existence void of necessary ritual and at risk of fuzzy thinking, why would anyone choose this self-identifier?

In an article on decreasing Millennial religiosity, one journalist proffers that capitalism is to blame: “Spirituality is what consumer capitalism does to religion. Consumer capitalism is driven by choice. You choose the things that you consume – the bands you like, the books you read, the clothes you wear – and these become part of your identity construction. Huge parts of our social interactions center on these things and advertising has told millennials, from birth, that these are things that matter, that will give you fulfillment and satisfaction. This is quite different from agricultural or industrial capitalism, where someone’s primary identity was as a producer. The millennial approach to spirituality seems to be about choosing and consuming different “religious products” – meditation, or prayer, or yoga, or a belief in heaven – rather than belonging to an organized congregation. I believe this decline in religious affiliation is directly related to the influence of consumer capitalism.”

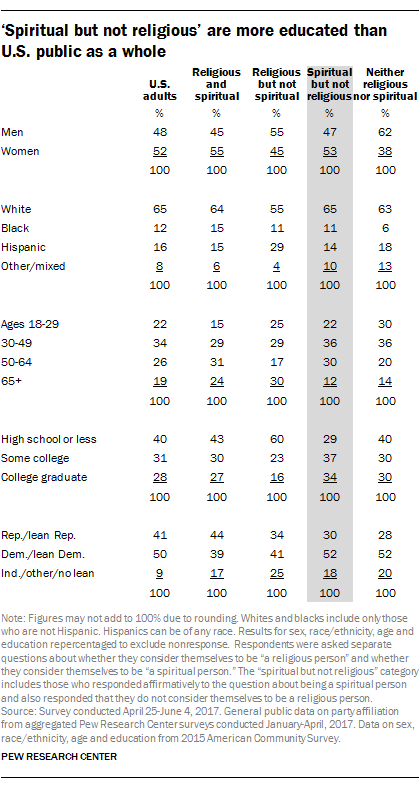

All of these reasons for the increase in spirituality over religiosity may be true. But there may be a simpler explanation: According to the aforementioned Pew Report, “Spiritual but not religious” Americans are more highly educated than the general public. Seven-in-ten (71%) have attended at least some college, including a third (34%) with college degrees.

Numerous articles have documented the challenge that the Internet represents to established authorities. Education, then (especially access to knowledge via the Internet), may be leading to increased questioning of authoritative institutions, including religious organizations. While the move towards ‘Spiritual But Not Religious’ attitudes could be caused by some form of intellectual laziness, the arguments for this are largely anecdotal. Occam’s Razor teaches us that the hypothesis requiring the fewest assumptions is usually the right one. Given that the rise in those who identify as ‘Spiritual But Not Religious’ is positively correlated with education, awareness is the most likely cause of the move away from religion.

Funerals for the Spiritual But Not Religious

September 1, 2017 in Pantheism News

For hundreds of years, particularly in the West, funerals have been officiated by religious leaders – priests, ministers, rabbis – in pious ceremonies that focused on the passing of the individual. In the last 50 years, however, a new, non-denominational official has been growing in popularity: the ‘funeral celebrant’.

The demand for these celebrants is growing in direct proportion to the rise of those who claim to be “spiritual but not religious.” Every seven years, the Pew Research Center releases a comprehensive Religious Landscape Study. In 2008, 16 percent of those polled claimed no religious affiliation. By 2015, that number had grown to 23 percent, with the drop in religious affiliation noted across denominations, genders, generations and racial groups.

The concept of funeral celebrants is analogous in Western countries to that of civil celebrants, the non-denominational equivalent for marriages. The idea started in Australia in 1973, when then-Attorney-General Lionel Murphy appointed civil marriage celebrants to officiate ceremonies of substance and meaning for non-church people. At that time, there were only two options for couples who wanted to be married: a formal religious ceremony in a church or a very basic civil ceremony, which was held in a municipal office. The celebrant role was quickly established and by 2014, 74% of all Australian weddings were officiated by celebrants. From Australia, the idea spread to other areas: in the 1980s to the U.K., and in the late 1990s to North America.

As civil marriage ceremonies became accepted, it soon followed that a similar official would be useful for secular funerals.

As of 2016, there are well over 1,000 trained funeral celebrants in North America. The USA Celebrant Foundation, established by graduates of the Australian-based International College of Celebrancy in 2003, has emerged as one of the leading organizations training civil celebrants in the USA. Although originally focused on wedding and naming ceremonies only, since 2009 it has added a focus on leading funeral observances. Funeral celebrants help in planning and overseeing the funeral proceedings much as traditional clergy do. They differ primarily in that they conduct non-religious (or semi-religious) and spiritual funeral services.

There is also a different focus: the funeral service tends to be a ‘celebration of life’ intended to honor the person’s memory. This approach places greater emphasis on how the person lived their life, their personality traits and the memories of mourners, as opposed to a traditional religious service, which often encourages people to consider the afterlife and emphasizes religious ritual.

Of course, how we deal with the end of life takes many forms across the planet. In Mongolia and Tibet, Vajrayana Buddhists believe in the transmigration of spirits after death: the soul moves on, while the body becomes an empty vessel. To return it to the earth, the body is chopped into pieces and placed on a mountaintop, which exposes it to the elements, including vultures. It’s a practice that’s been done for thousands of years and, according to a recent report, about 80% of Tibetans still choose it. In Victorian England, a funeral mute was hired to stand around at funerals with a sad, pathetic face. This professional mourner (usually a woman) would shriek and wail (often while clawing her face and tearing at her clothing) to encourage others to weep. In Ghana, people aspire to be buried in coffins that represent their work or something they loved in life. These so-called “fantasy coffins” were recently popularized by Buzzfeed, which showed images of 29 outrageous ones, from a coffin shaped like a Mercedes-Benz for a businessman to an oversized fish for a fisherman.

While these ideas may seem extreme to modern Westerners, they show a diversity of ways to handle the passage of a loved one. For those who are spiritual but not religious, a funeral celebrant may be an ideal assistant.

Is Artificial Intelligence (AI) a Threat to Humanity?

August 6, 2017 in Pantheism News

This past week, Mark Zuckerberg said warnings about artificial intelligence, such as those made recently by Elon Musk, were “pretty irresponsible.” In response, Musk tweeted that Zuckerberg’s “understanding of the subject is limited.” The debate raises the question: Are we really in danger of being eclipsed by AI machines?

Stephen Hawking has hailed Artificial Intelligence (AI) as “the best, or the worst thing, to ever happen to humanity.” AI could potentially assist humanity in eradicating disease, poverty, and the damage we have done to the natural world through industrialization. On the other hand, Hawking famously told the BBC in 2014 that “artificial intelligence could spell the end of the human race.” So which is it? To get at the answer, we first must define what it is that makes us unique. By defining the things we do better than other species, we have a better idea of how another intelligence could potentially surpass us.

The most common reason cited for our evolutionary ascendency is our ability to imagine alternative futures and make deliberate choices accordingly. This foresight distinguishes us from other animals. In fact, the three primary cognitive processes of ideation (imagination, creativity and innovation) are frequently held up as the core of our uniqueness. But what if computers begin to show these characteristics? And if these cognitive abilities can be superseded, are we destined to become future pets, or worse, to be eliminated as pests? While these scenarios sound fantastical to many, we may find out whether they are plausible sooner rather than later. Some futurists, including Google’s resident seer, Ray Kurzweil, predict the time when AI eclipses our own abilities is near – potentially around 2045.

Paul Allen, the co-founder of Microsoft, notably disputed Mr. Kurzweil’s timing of this supersedence – known in futurist circles as “The Singularity”. In his 2011 MIT Technology Review article, Allen claims that smart machines have a much longer way to go before they outrun human capabilities. As Allen says, “Building the complex software that would allow The Singularity to happen requires us to first have a detailed scientific understanding of how the human brain works that we can use as an architectural guide, or else create it all de novo. This means not just knowing the physical structure of the brain, but also how the brain reacts and changes, and how billions of parallel neuron interactions can result in human consciousness and original thought.” Getting this knowledge, he goes on to say, is not impossible — but its acquisition runs into what he calls the “complexity brake”: the understanding of the detailed mechanisms of human cognition requires an understanding of natural systems, which typically require more and more specialized knowledge to characterize them. This forces scientific researchers to continuously expand their theories in more and more complex ways. It is this complexity brake and the arrival of powerful new theories (rather than the Law of Accelerating Returns that Kurzweil cites as reasoning for his 2045 prediction), that will govern the pace of scientific progress required to achieve The Singularity.

The ‘complexity brake’ notwithstanding, computers have made startling advances in the six years since Allen’s article. One area which until very recently was deemed a “safe haven” from intrusion by AI was human creativity. In a fascinating June 2017 study conducted by Rutgers University’s Art and Artificial Intelligence Laboratory, 18 art judges at the prestigious Art Basel in Switzerland ended up preferring machine-created artworks to those made by humans. Although the judges had no idea which pieces were machine-made, they deemed the AI-generated pieces “more communicative and inspiring” than those made by humans. Some of the judges even thought that the majority of works at Art Basel were generated by the programmed system. This represents a significant achievement for AI. The Rutgers computer scientists had previously developed algorithms to study artistic influence and to measure creativity in art history, but with this project, the lab’s team used the algorithms to generate entirely new artworks using a new computational system that role-plays as both an artist and a critic, attempting to demonstrate creativity without any need for a human mind.

Just a month earlier (May 2017), Google’s AlphaGo algorithm defeated Ke Jie, the world’s top player of Go (an ancient Chinese board game). While AI has been beating human players at various games for many years now (Deep Blue over Kasparov in a 1997 chess match, and Watson over top Jeopardy player Ken Jennings in 2011), AlphaGo’s success is considered the most significant yet for AI due to the complexity of Go, which has a practically incalculable number of possible moves and puts a premium on human-like “intuition”, instinct and the ability to learn.

In yet another development in June of 2017, Facebook’s chatbots developed their own language to communicate with one another. The researchers at Facebook’s Artificial Intelligence Research lab were using machine learning to train their “dialog agents” to negotiate, when the bot-to-bot conversation “led to divergence from human language as the agents developed their own language for negotiating.” Although the bots were immediately shut down, the occurrence points to the speed at which computers are becoming autonomous. As The Atlantic’s article on the event states, “The larger point…is that bots can be pretty decent negotiators—they even use strategies like feigning interest in something valueless, so that it can later appear to “compromise” by conceding it.” In other words, AI is learning to strategize in very human ways.

It’s clear that the field of artificial intelligence is advancing dramatically. But on the question of whether robots will eventually take over, the future is less clear. Theoretical physicist and futurist Michio Kaku quoted Rodney A. Brooks (former director of the Artificial Intelligence Lab at the Massachusetts Institute of Technology and co-founder of iRobot) on the possibility: “(It) will probably not happen, for a variety of reasons. First, no one is going to accidentally build a robot that wants to rule the world. Creating a robot that can suddenly take over is like someone accidentally building a 747 jetliner. Plus, there will be plenty of time to stop this from happening. Before someone builds a ‘super-bad robot,’ someone has to build a ‘mildly bad robot,’ and before that a ‘not-so-bad robot.’”

Of course, this assumes a globally conscientious and concordant development protocol. Google attempted to create just such a standard with their 2016 “Five Rules for AI Safety”, modeled on Isaac Asimov’s famous “Three Laws of Robotics”. But the stakes are too high to leave the standards to the AI developers alone. As Elon Musk recently clarified during a Tesla investor conference call, “The concern is more with how people use AI. I do think there are many great benefits to AI, we just need to make sure that they are indeed benefits and we don’t do something really dumb.” Given that our science fiction leans towards the dystopian with regard to AI (Blade Runner, A.I., The Matrix, Terminator and I, Robot, among others), we owe it to ourselves to use our hallowed ability to imagine the future as one in which we live better as a result of AI.

Poll Names Dawkins’ “The Selfish Gene” as the Most Influential Science Book of All Time

July 21, 2017 in Pantheism News

A new public poll conducted by The Royal Society reflects the influence famed evolutionary biologist and atheist Richard Dawkins has had on our modern zeitgeist. When readers were asked to choose the most influential science book from a curated list of 11 widely praised books, Dawkins’ 1976 tome, “The Selfish Gene,” topped the poll.

More than 1300 readers weighed in for the poll. The eleven books included in the list were chosen by The Royal Society’s Head Librarian, Keith Moore, based on “their impact on the general public as well as the science community.” Also included were “A Brief History of Time” by Stephen Hawking (Bantam), “The Immortal Life of Henrietta Lacks” by Rebecca Skloot (Pan) and “Silent Spring” by Rachel Carson (Penguin Modern Classics).

236 readers chose Dawkins’ book as the most influential (18%), followed by “A Short History of Nearly Everything” by Bill Bryson at 150 votes, and Charles Darwin’s “On the Origin of Species” at 101 votes.

The full list of books in the poll included:

- “The Natural History of Selborne,” by Gilbert White (OUP Oxford, 1789)

- “On the Origin of Species,” by Charles Darwin (Oxford World Classics, 1859)

- “Married Love,” by Marie Carmichael Stopes (1918)

- “The Science of Life,” by H.G. Wells, Julian Huxley and G.P. Wells (Cassell and Company, 1920)

- “Silent Spring,” by Rachel Carson (Penguin Modern Classics, 1962)

- “The Selfish Gene,” by Richard Dawkins (OUP Oxford, 1976)

- “A Brief History of Time,” by Stephen Hawking (Bantam, 1988)

- “Fermat’s Last Theorem,” by Simon Singh (Fourth Estate, 1997)

- “A Short History of Nearly Everything,” by Bill Bryson (Black Swan, 2003)

- “Bad Science,” by Ben Goldacre (Harper Perennial, 2009)

- “The Immortal Life of Henrietta Lacks,” by Rebecca Skloot (Pan, 2010)

The poll results were announced at a 30th anniversary gala at the British Library, where early editions of Darwin’s “On the Origin of Species” and Newton’s “Principia” were on display. The gala was held to recognize a milestone for The Royal Society Insight Investment Science Book Prize, which has celebrated outstanding popular science books from around the world since 1987. Open to authors of science books written for a non-specialist audience, the prize has previously championed writers such as Stephen Hawking, Jared Diamond, Stephen Jay Gould and Bill Bryson. A shortlist for this year’s Book Prize will be announced on Thursday, August 3rd, with the winner being revealed during an evening ceremony on Tuesday, September 19th, 2017.

Poll participants declared Dawkins’ book a “masterpiece,” and cited Dawkins as an “excellent communicator”, with many saying his book had ‘changed their perspective of the world and the way they were trained to see science.’ (Those within pantheist circles may recognize Dawkins for another of his popular books, “The God Delusion,” in which he devoted an entire chapter to pantheism, calling it “sexed-up atheism.”)

While Dawkins’ popularity should come as no surprise, the poll notably omitted many titles that are popular within the pantheistic community, including “Cosmos” and “The Demon-Haunted World,” both by Carl Sagan. Other science-popularizing authors who did not make the list included David Deutsch, Siddhartha Mukherjee, Oliver Sacks, Richard Feynman, Hope Jahren and Thomas Kuhn. What science books do you think qualify as the “Most Influential” in your life? Readers are encouraged to comment below.

2016 Deadliest Yet for Environmental Defenders

July 13, 2017 in Pantheism News

According to a new ‘Defenders of The Earth’ report released by Global Witness, 2016 was the deadliest year yet for environmental activists. The report found that an average of almost 4 activists were killed per week, with Brazil, Colombia and the Philippines having the most murders.

Nicaragua was the most dangerous country in terms of murders per capita, with 11 environmental activists killed last year. Much of the killing there may be attributable to a 2013 agreement between the Nicaraguan government and the HKND Group, a Chinese company tasked with building a canal across the country to connect the Atlantic and Pacific Oceans. The canal is projected to force up to 120,000 indigenous people to relocate, according to the Defenders report. This mass relocation has led to protests – and to the killing of these protesters.

Referring to the Nicaraguan government’s extreme response to their protests, activist Francisca Ramírez said, “We have carried out 87 marches, demanding that they respect our rights …(but) the only response we have had is the bullet.” Ramírez, who has been threatened, assaulted, and arrested for protesting the canal, said, “They sell the image that we are against development. We are not against development, we are against injustice.”

Sadly, this injustice seems to be growing: killings were distributed across 24 countries in 2016, versus 16 in 2015. Of course, this number only includes reported murders – the actual numbers are probably far higher.

The violence towards environmental defenders has been fueled by an increasing global demand for land and natural resources. As existing resources deplete, companies in the mining, logging, hydro-electric and agricultural fields continue to expand their territories. The potential profit from this expansion, when combined with government-backed enforcement of these property “rights,” has led to increasingly disastrous consequences for those willing to oppose them. Of these sectors, opposition to mining continues to be the most dangerous, with 33 activists killed after having opposed mining and oil projects. The second most deadly sector was logging, with 23 deaths attributable to defending land from logging. Even non-activists have been at risk: 9 park rangers were killed last year in Africa, while trying to fend off poachers.

A key finding of the report is the failure of governments and business to tackle the root cause of the attacks: the imposition of extractive projects on communities without their free, prior and informed consent. Worse yet, aggressive criminalization and civil cases are being levied against the defenders. This increasingly stifles both environmental activism and land rights defense across the world, including in developed countries like the US.

The report calls for governments, companies and investors to guarantee affected communities the right to make free and informed choices about how their land and resources are used. It further urges laws and policies to support and protect these environmental defenders, along with accountability for their abusers. As champions of all that is natural, it is incumbent upon our community to echo these calls.

The Mysterious “Cold Spot” in Space: Void, or Multiverse Collision Point?

May 25, 2017 in Pantheism News

Since 1964, scientists have been using microwave receivers to measure the radio hiss known formally as the Cosmic Microwave Background (CMB). The CMB is believed to be a remnant of the Big Bang. Measuring the CMB, the scientists found the universe has cooled to a surprisingly uniform 3 Kelvin (-455 ºF), following the initial heat extremes that occurred 13.8 billion years ago. At least that is what they thought until 1992, when the COBE satellite discovered there were places in the CMB both hotter and colder than that average. These variations, however, are tiny: tens of millionths of a degree. Then, in 2004, even this near-uniformity was upset, with the discovery of a far greater anomaly: within the pattern of small hot and cold spots was one far larger and cooler called (wait for it) the ‘Cold Spot’. Recent efforts to explain this ‘Cold Spot’ have been disappointing, leading some scientists to propose something far more exotic: the area may be caused by a collision between our universe and a parallel one.

At only 150 microkelvins below average, the ‘Cold Spot’ in the CMB isn’t really all that much colder than the universal average. And despite its size and temperature, the area would still not be considered unusual were it not for the relatively hot ring around it. This has compelled many scientists to look for a simple explanation for its existence (Occam’s razor in practice). However, the anomaly has yet to fit within any known model of our universe. Until last month, the most popular explanation for the mystery had been that it was the result of a “supervoid” (voids are areas of space containing very few galaxies). The prevailing thought was that the ‘Cold Spot’ was simply a larger version of this phenomenon. A recent study by Ruari Mackenzie and Tom Shanks of Durham University in the UK cast doubt on this idea, however. It found this region of space had only a small deficit of galaxies, not nearly enough to explain the ‘Cold Spot’ as a void. In short, the prevailing answer appears to be wrong.

Some scientists have proposed a far more controversial explanation for the ‘Cold Spot’: it is the imprint of another universe beyond our own. First claimed by cosmologist and theoretical physicist Laura Mersini-Houghton, this hypothesis basically surmises that the ‘Cold Spot’ is where our universe is bumping into another universe (more technically, it is a direct result of cosmic inflation caused by quantum entanglement). If true, this would produce an identifiable polarization signal in the ‘Cold Spot’. Data from the European Space Agency’s Planck spacecraft may prove or disprove this, but it has yet to be fully analyzed. According to Shanks, if the polarization signal is found, then a collision with another universe would “become the most plausible explanation, believe it or not.” Such a discovery would be astonishing: if multiverses can be proved, we will have to acknowledge that even our universe is not exceptional.

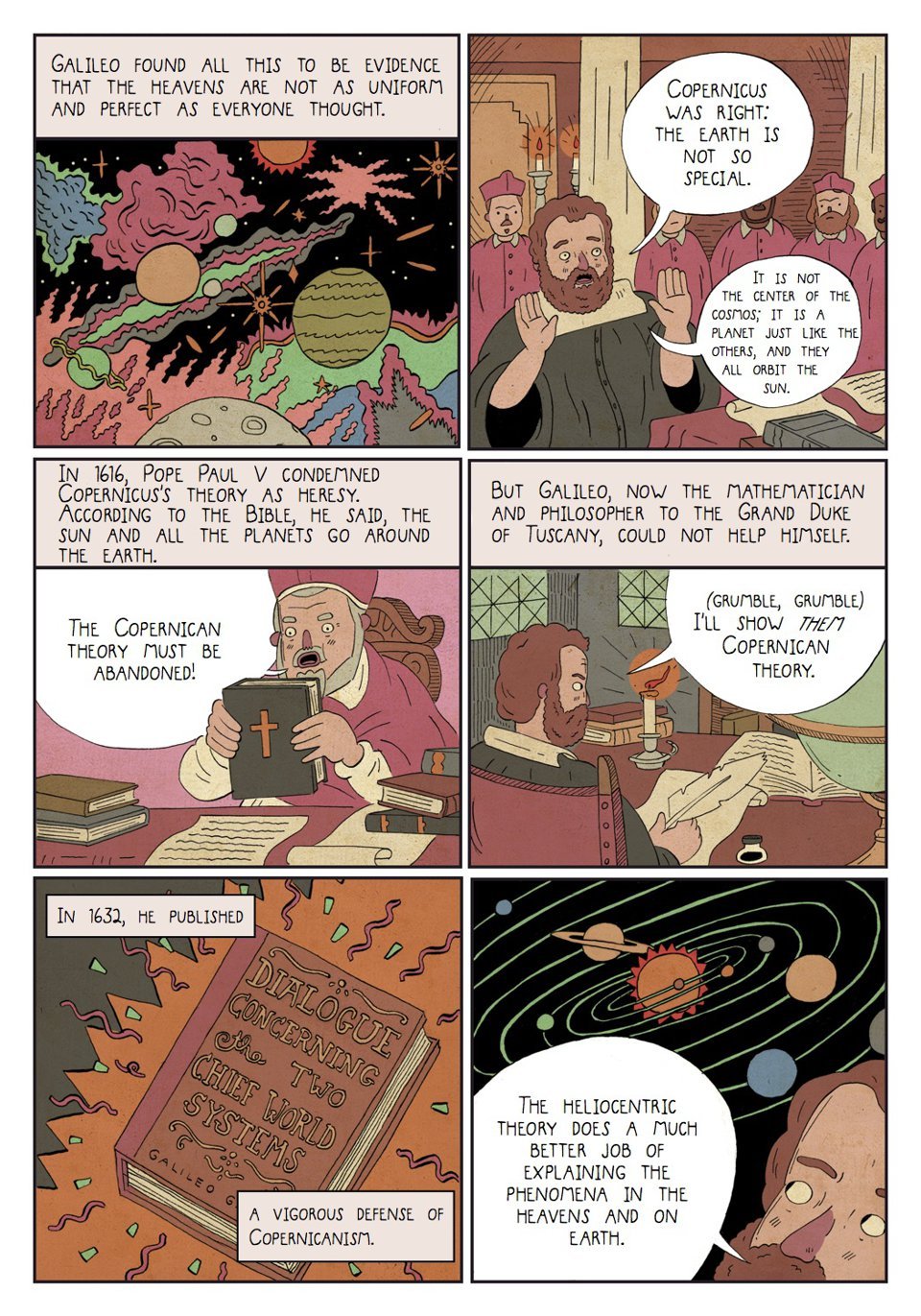

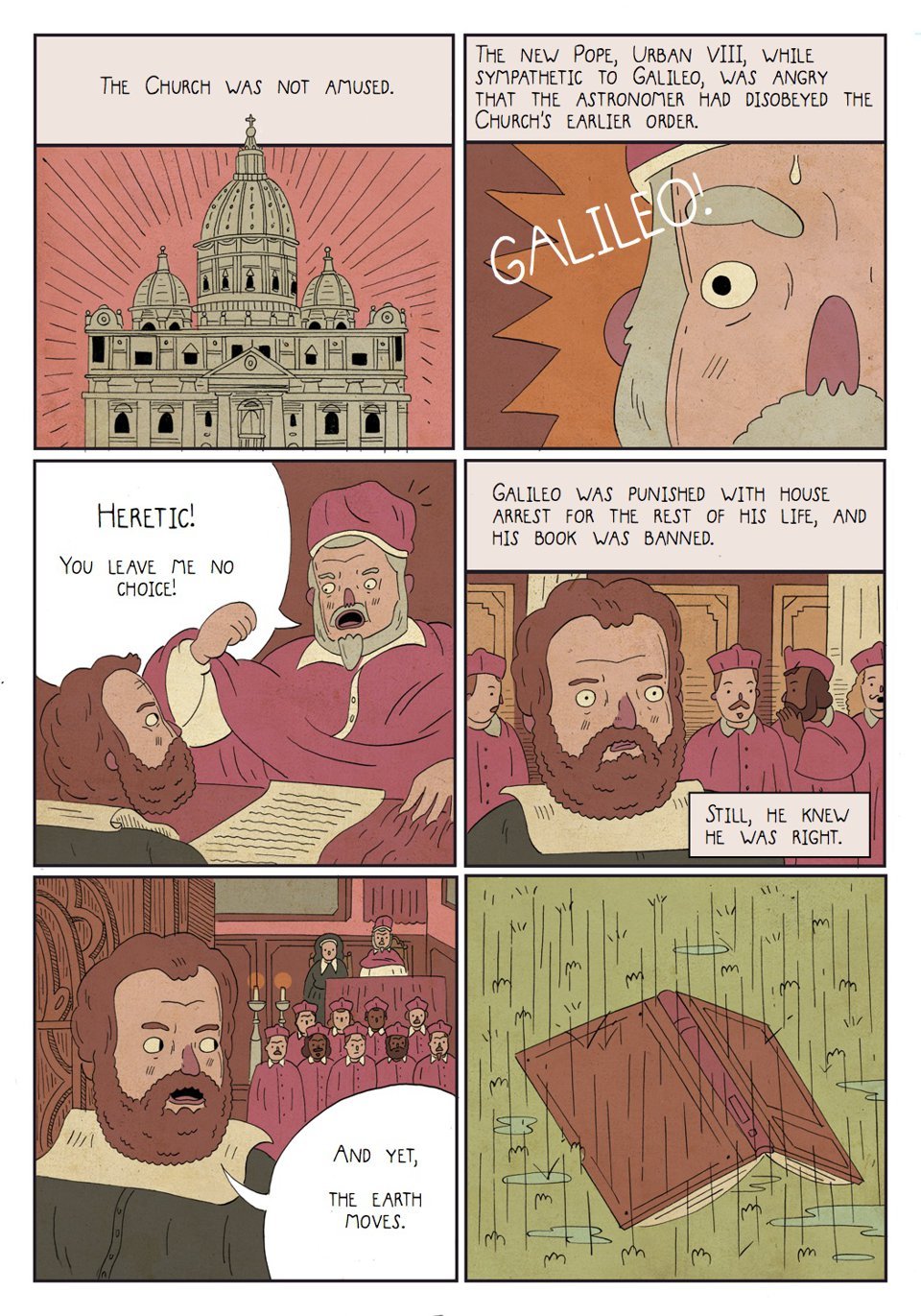

New Graphic Novel Details the Dawn of Modern Western Philosophy

May 12, 2017 in Pantheism News

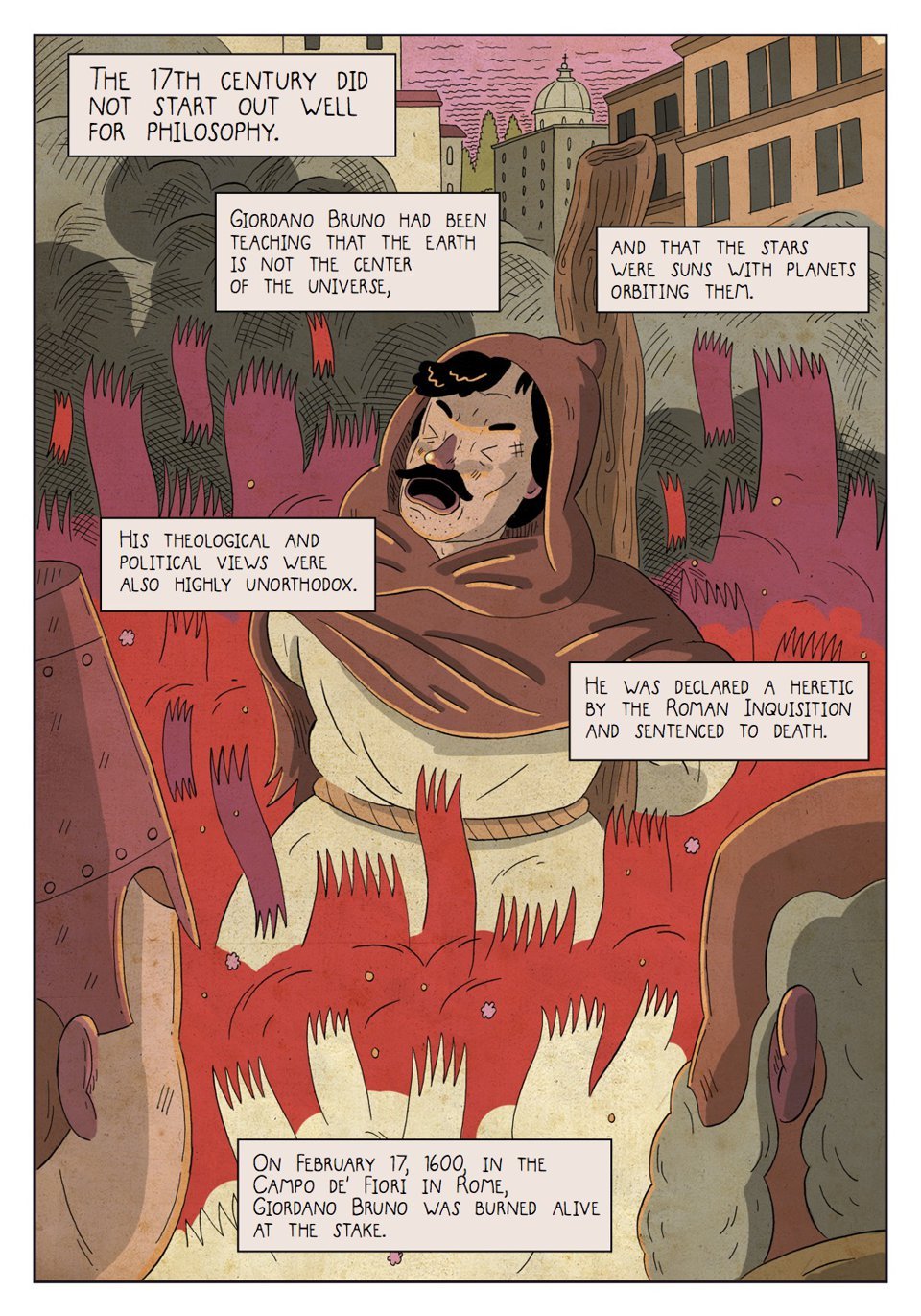

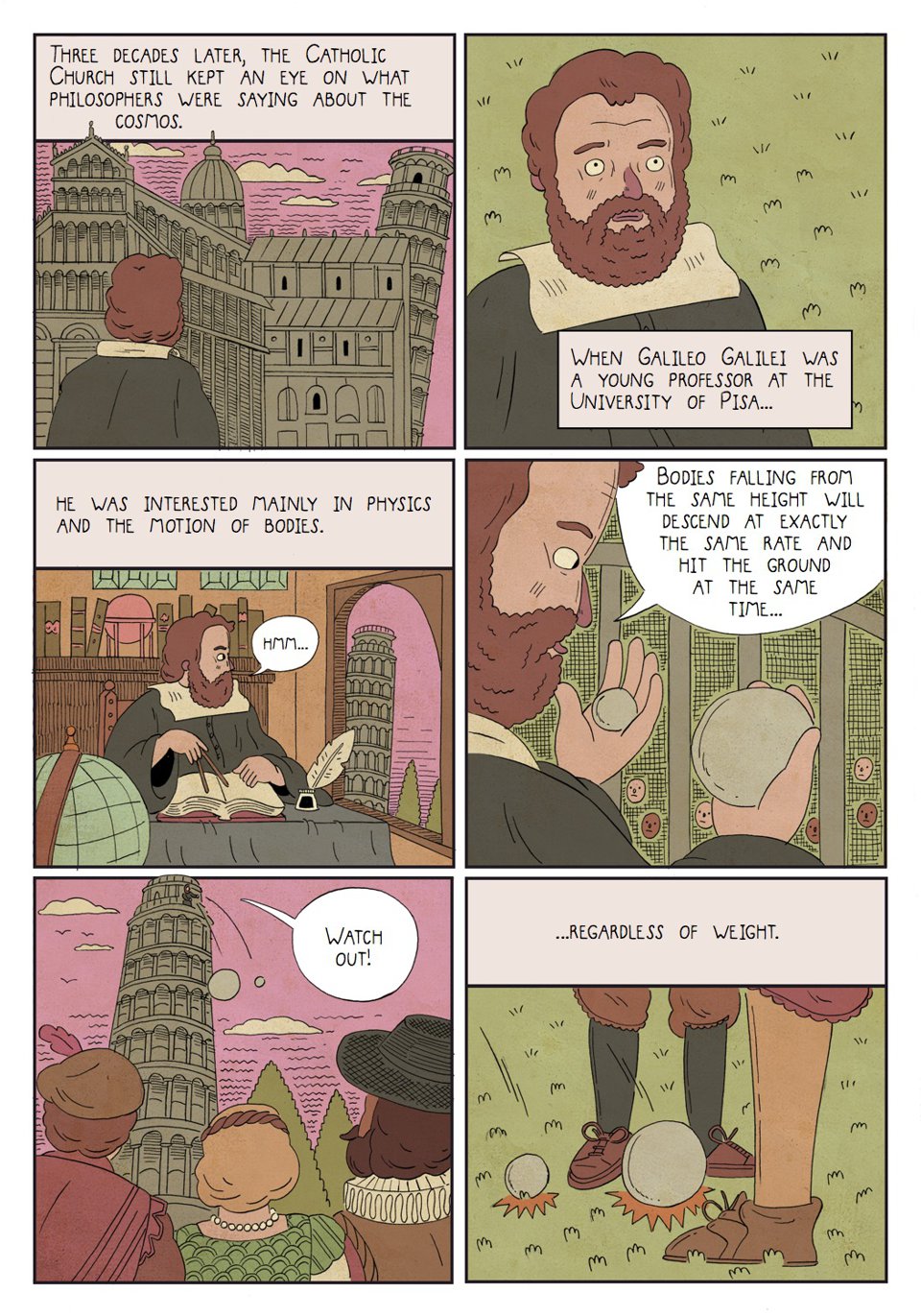

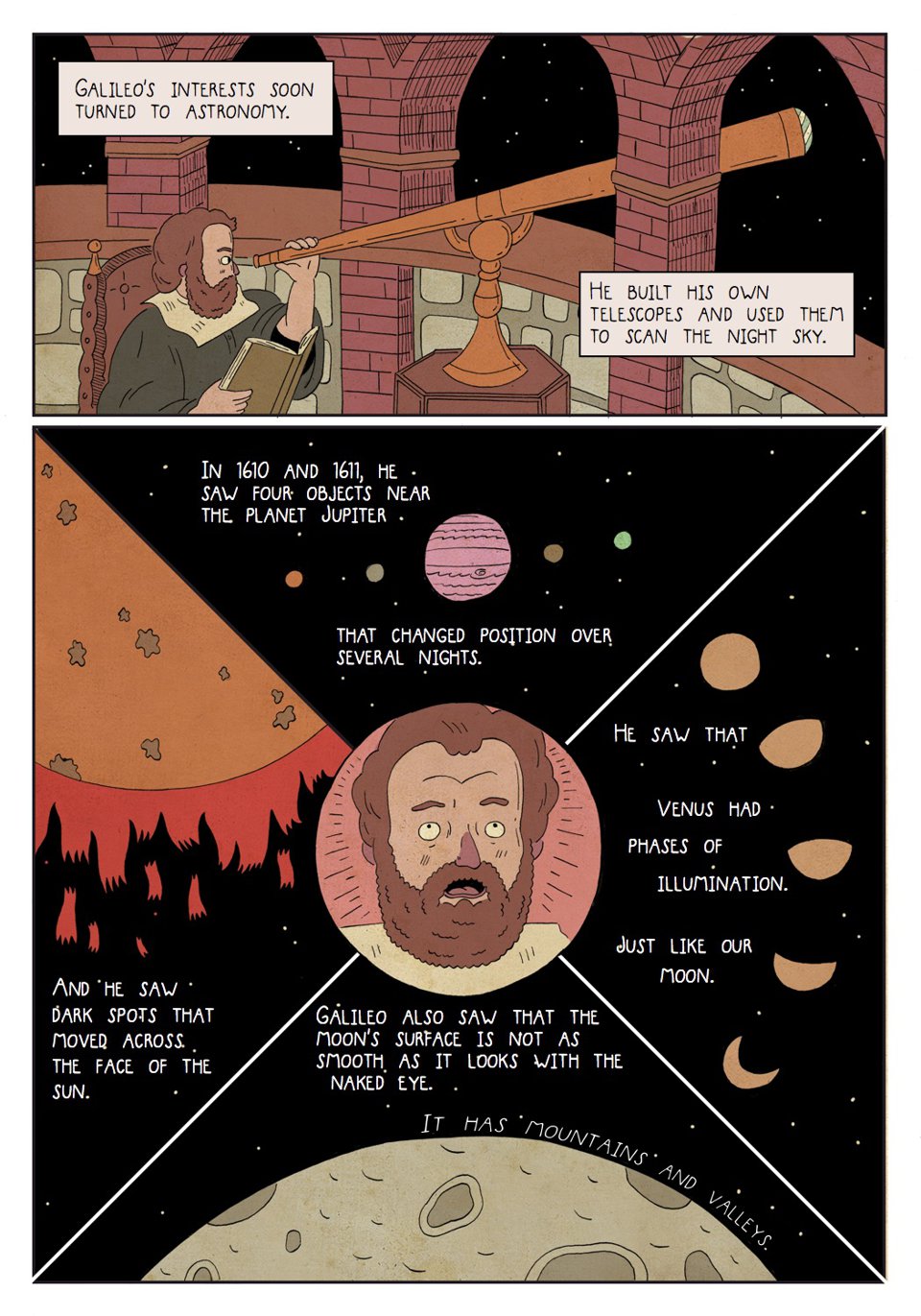

A new graphic novel, “Heretics!: The Wondrous (and Dangerous) Beginnings of Modern Philosophy,” by philosophy professor Steven Nadler and his graphic artist son, Ben Nadler, tells of the fight for science during the 17th century. The novel opens with what many consider to be the ‘patron saint’ of pantheism, Giordano Bruno, being burned at the stake for heretical views. Bruno held the (then) blasphemous view that the Earth was not the center of the universe, and the stars were suns with their own planets.

Published by Princeton University Press, the nearly 200-page book contains 173 color illustrations, illuminating the upheaval of Catholic dogma during the 1600s. (Examples from the first chapter are below.) The father/son author duo follows the controversial and challenging views of some of the Western world’s greatest thinkers, from Copernicus and Galileo through Spinoza and Newton. Set to be released June 20, 2017, the book is already the #1 best-seller in Modern Philosophy on Amazon.

The Nadlers’ primary goal was to make the stories and ideas both accessible and as engaging as possible, without condescension. The novel takes some humorous liberties with historical fact (laptop computers wouldn’t be available for another 400 years), but does well to capture the intellectual triumphs of its philosopher protagonists.

Both enlightening and entertaining, the book shows how modern philosophical thought blossomed in the 17th century, playfully but factually dealing with the nature of matter, God, mind-body dualism, the structure of society, and the existence of knowledge itself. Interspersed with quotes from the philosophers themselves, the narrative uses wit and humor to approach some of humanity’s deepest questions.

The text and illustrations deftly instruct those new to the period, while serving as a refresher for readers who have forgotten what they studied in history and philosophy. As elaborated in the pages, the philosophers of this age continued to disagree about matter and spirit, destiny, and the nature of God, but shared the belief that “the older, medieval approach to making sense of the world—with its spiritual forms and…its concern to defend Christian doctrine…no longer worked and needed to be replaced by more useful and intellectually independent models.”

Arguably the most fascinating and important period in the history of Western philosophy, the 17th century saw dramatic changes to many people’s worldview. Concluding with the time of Isaac Newton, the Nadlers’ novel captures how one century upended more than a thousand years of ideas previously held as truth: Man was no longer at the center of creation, the Sun was no longer spinning around the Earth, and the church was no longer the authority on matters discoverable through scientific methods. As we face renewed challenges to science-based inquiry, the book provides a timely reminder that the freedom to be curious is worth defending.

Steven Nadler is the William H. Hay II Professor of Philosophy and Evjue-Bascom Professor in the Humanities at the University of Wisconsin–Madison. His books include Spinoza: A Life, which won the Koret Jewish Book Award, and Rembrandt’s Jews, which was a finalist for the Pulitzer Prize. He lives in Madison. Ben Nadler is a graduate of the Rhode Island School of Design and an illustrator. He lives in Chicago. Follow him on Instagram at @bennadlercomics.

A preview of some of the first chapter is below: